The example introduces the package’s entity matching functionality using neural-symbolic learning. The goals of the example are (i) to introduce the available options, (ii) discuss some aspects of the underlying model logic, and (iii) contrast the neural-symbolic functionality to that of purely deep learning entity matching. For an introduction to basic concepts and conventions of the package one may consult the Entity Matching with Similarity Maps and Deep Learning example (henceforth Example 1). For the reasoning capabilities of the package, set the Reasoning example.

Preprocessing

We use the gaming data of Example 1. Similar to the deep learning case, the neural-symbolic functionality leverages similarity maps and expects the same structure of datasets as inputs. The concepts and requirements are detailed in Example 1, we abstain from detailing them here for brevity. In summary, our preprocessing stage constructs three datasets left, right, and matches, where left has 36 records, right has 39 records, and matches has the indices of the matching records in left and right.

matching_data <- fuzzy_games_example_data()

left <- matching_data$left

right <- matching_data$right

matches <- matching_data$matchesMatching Model Setup

For simplicity, we employ the similarity map we used in Example 1.

instructions <- list(

title = list("jaro_winkler"),

platform = list("levenshtein", "discrete"),

year = list("euclidean", "discrete"),

`developer~dev` = list("jaro")

)

similarity_map <- SimilarityMap(instructions)

show(similarity_map)## SimilarityMap[

## ('title', 'title', 'jaro_winkler')

## ('platform', 'platform', 'levenshtein')

## ('platform', 'platform', 'discrete')

## ('year', 'year', 'euclidean')

## ('year', 'year', 'discrete')

## ('developer', 'dev', 'jaro')]Matching models using neural-symbolic learning are constructed using the NSMatchingModel class. The constructor expects a similarity map object, exactly as in the pure deep learning case.

model <- NSMatchingModel(similarity_map)Although the exposed interface is similar to the deep learning model, the underlying class designs are different. The DLMatchingModel class inherits from the tensorflow.keras.Model class, while the NSMatchingModel uses a custom training loop. Nonetheless, the NSMatchingModel class exposes compile, fit, evaluate, predict, and suggest functions to ensure that the calling conventions in the user space are as consistent as possible between the two classes.

For instance, the compile method of the NSMatchingModel can be used to set the optimizer used during training.

Model Training

The model is fitted using the fit function (see the fit documentation for details). The underline training loop of the neural-symbolic model is custom. Training treats the Python field pair and record pair models as fuzzy logic predicates. It adjusts their parameters to satisfy the following logic. First, for every matching example, all the field predicates and the record predicate should be (fuzzily) true. This rule is motivated by the observations that true record matches should in principle constitute matches in all subsets of their associated fields. Second, at least one of the field predicates and the record predicate should by (fuzzily) false for every non matching example. This rule is motivated by the observation that non matches should be distinct in at least one of their associated fields, because if they are not, then, in principle, they constitute a match.

The fit function expects left, right, and matches data sets. As in the deep learning case, the mismatch_share parameter controls the ratio of counterexamples to matches. The parameter satisfiability_weight is a float value between 0 and 1 that controls the weight of the satisfiability loss in the total loss function. The default value is 1.0, i.e., the training process considers only the fuzzy logic axioms. A value of 0.0 means that only the binary cross entropy loss is considered, which essentially reduces the model to a deep learning model. Any value between 0 and 1 trains a hybrid model that balances the two losses.

Finally, logging can be customized by setting the parameters verbose and log_mod_n. The verbose parameter controls whether the training process prints the loss values at each epoch. The log_mod_n parameter controls the frequency of the logging. For instance, if log_mod_n = 5, the training process logs the loss values every 5 epochs. The default value is 1.

model |> fit(

left,

right,

matches,

epochs = 151L,

batch_size = 16L,

verbose = 1L,

log_mod_n = 10L

)## | Epoch | BCE | Recall | Precision | F1 | Sat |

## | 0 | 6.7524 | 0.0000 | nan | nan | 0.7414 |

## | 10 | 5.7395 | 0.0000 | nan | nan | 0.8205 |

## | 20 | 7.2079 | 0.0000 | nan | nan | 0.8408 |

## | 30 | 7.8617 | 0.0000 | nan | nan | 0.8445 |

## | 40 | 8.1429 | 0.0000 | nan | nan | 0.8465 |

## | 50 | 5.7348 | 0.0000 | nan | nan | 0.8511 |

## | 60 | 1.6810 | 1.0000 | 0.9750 | 0.9873 | 0.8931 |

## | 70 | 0.6223 | 1.0000 | 0.9750 | 0.9873 | 0.9211 |

## | 80 | 0.3391 | 1.0000 | 0.9750 | 0.9873 | 0.9333 |

## | 90 | 0.3114 | 1.0000 | 0.9750 | 0.9873 | 0.9368 |

## | 100 | 0.3164 | 1.0000 | 0.9750 | 0.9873 | 0.9381 |

## | 110 | 0.3274 | 1.0000 | 0.9750 | 0.9873 | 0.9390 |

## | 120 | 0.3399 | 1.0000 | 0.9750 | 0.9873 | 0.9394 |

## | 130 | 0.3524 | 1.0000 | 0.9750 | 0.9873 | 0.9397 |

## | 140 | 0.3655 | 1.0000 | 0.9750 | 0.9873 | 0.9399 |

## | 150 | 0.3775 | 1.0000 | 0.9750 | 0.9873 | 0.9400 |

## Training finished at Epoch 150 with DL loss 0.3775 and Sat 0.9400Predictions and Suggestions

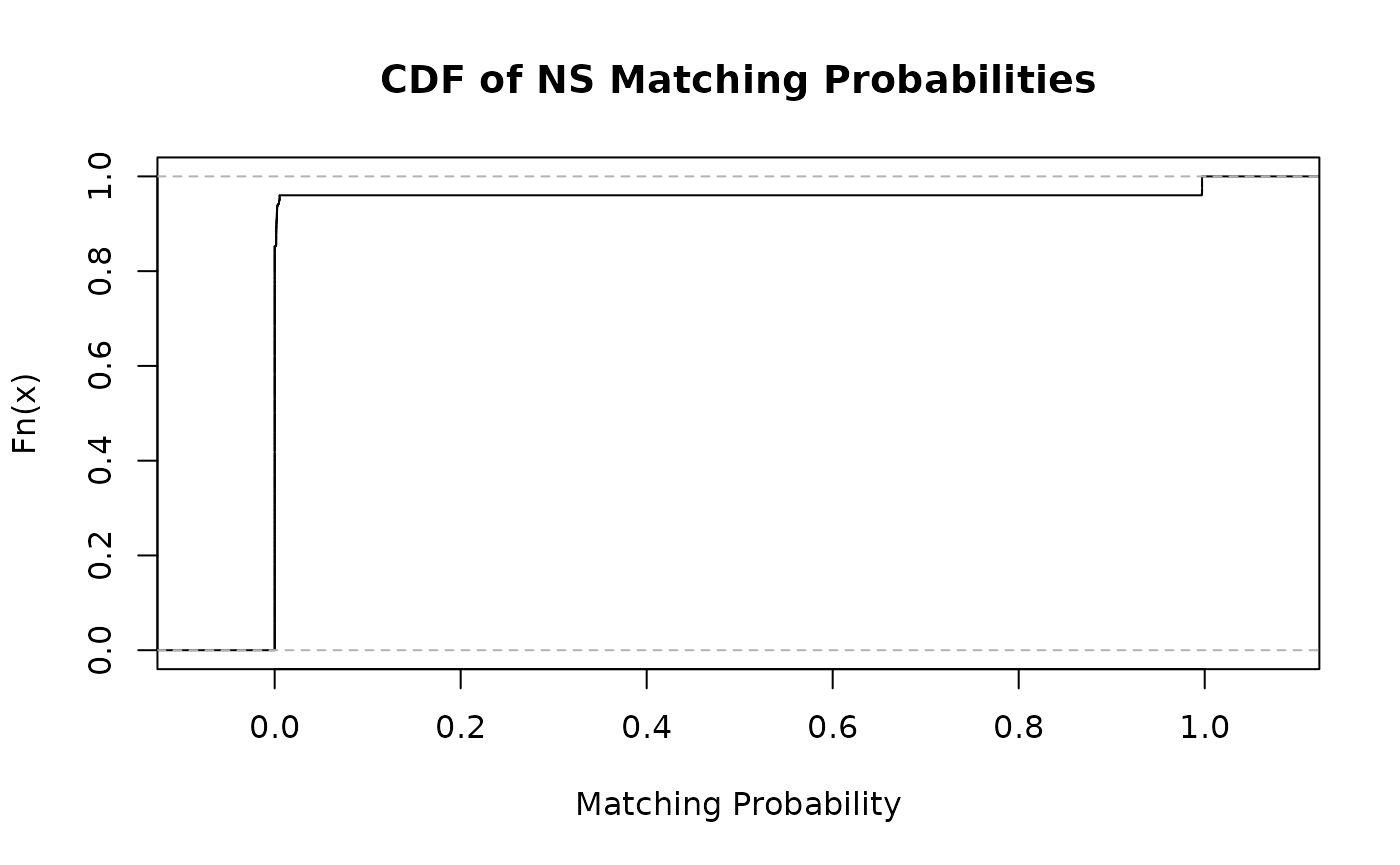

Matching predictions and suggestions follow the same calling conventions as corresponding deep learning member functions. Predictions can be obtained by calling predict and passing the left and right datasets. The returned prediction probabilities are stored in row-major order. First, the matching probabilities of the first row of left with all the rows of right are given. Then, the probabilities of the second row of left with all the rows of right are given, and so on. In total, the predict function returns a vector with rows equal to the product of the number of rows in the left and right data sets.

model |>

predict(left, right) |>

ecdf() |>

plot(main = "CDF of NS Matching Probabilities", xlab = "Matching Probability")

The suggest function also expects the left and right datasets as input. The function returns the top count matching predictions of the model for each row of the left dataset. The prediction probabilities of predict are grouped by the indices of the left dataset and sorted in descending order.

suggestions <- model |>

suggest(left, right, count = 3L)

suggestions["true_match"] <- apply(suggestions[, c(1, 2)], 1, function(r) {

any(r[1] == matches[, 1] & r[2] == matches[, 2])

})

duplicates <- matches[(nrow(matches) - 2):nrow(matches), c("left", "right")]

suggestions["duplicate"] <- apply(suggestions[, c(1, 2)], 1, function(r) {

any(r[1] == duplicates[1] & r[2] == duplicates[2])

})

rmarkdown::paged_table(suggestions, options = list(rows.print = 12))